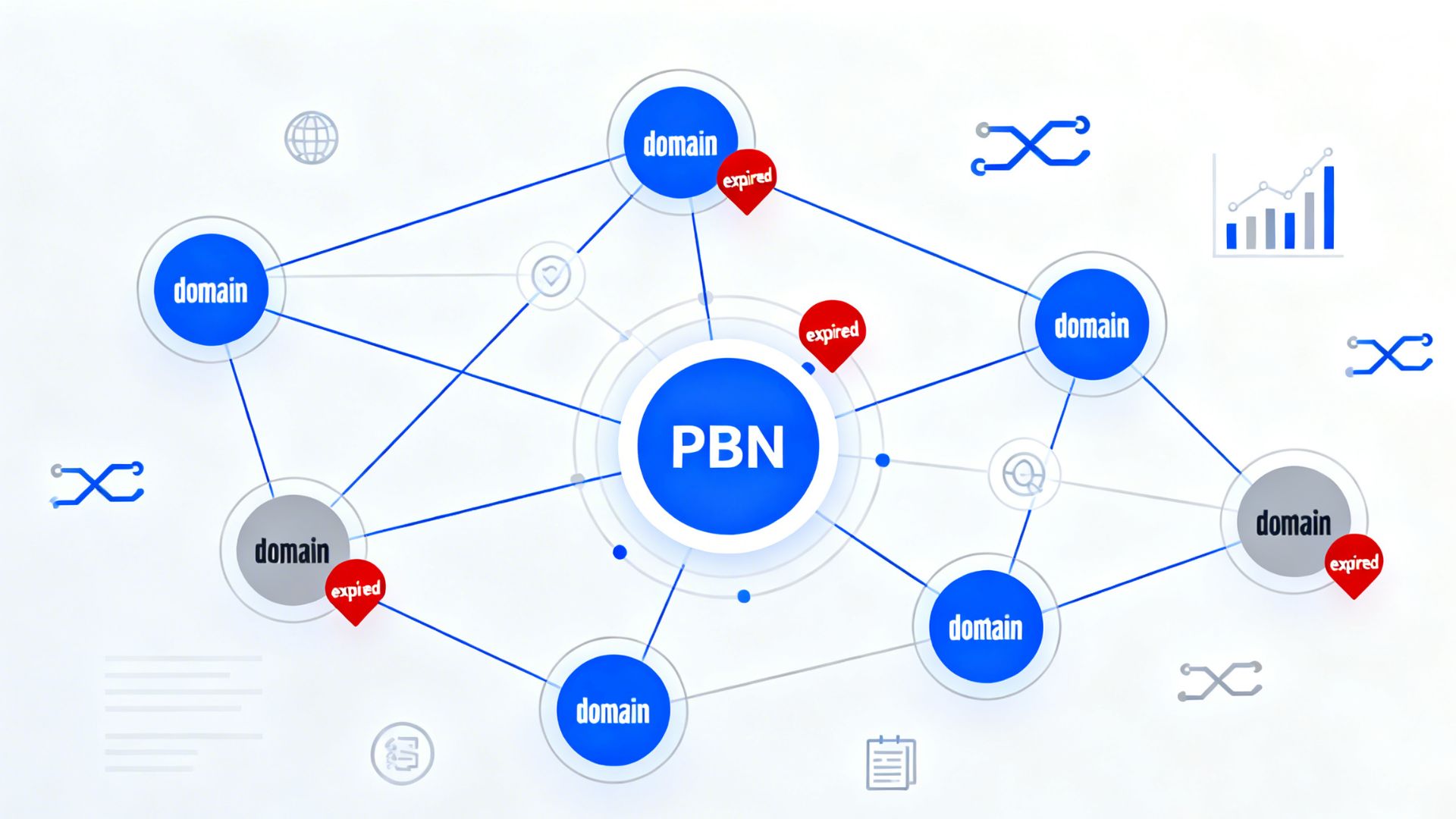

Private Blog Networks, commonly called PBNs, remain one of the most debated tactics in search engine optimization because they attempt to influence rankings through controlled backlinks. Modern search algorithms identify networks through dozens of footprint signals such as shared hosting ranges, identical site structures, and repetitive linking patterns.

Because of this, reducing detectable patterns requires diversification across multiple site elements rather than focusing on a single tactic. This article outlines key strategies for reducing recognizable footprints by diversifying infrastructure, content architecture, technical configurations, and link deployment practices.

Infrastructure Camouflage

Infrastructure diversification is one of the most critical steps in minimizing recognizable network patterns. Search engines frequently analyze IP ranges and hosting relationships to determine whether multiple websites are controlled by the same entity. Distributing sites across more than 15 hosting providers and keeping them on different C-class (/24) and B-class (/16) IP ranges reduces the likelihood of clustering signals.
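As a rough illustration of how shared ranges can be spotted, the sketch below groups a site inventory by enclosing network using Python's standard `ipaddress` module. The `SITE_IPS` inventory, the function names, and the domain names are hypothetical, and the addresses are taken from reserved documentation ranges.

```python
from collections import defaultdict
from ipaddress import ip_network


def group_by_prefix(site_ips, prefix_len):
    """Map each network of the given prefix length to the sites inside it."""
    groups = defaultdict(list)
    for site, ip in site_ips.items():
        # strict=False accepts a host address and returns its enclosing network
        network = ip_network(f"{ip}/{prefix_len}", strict=False)
        groups[str(network)].append(site)
    return dict(groups)


def shared_ranges(site_ips, prefix_len=24):
    """Return only the ranges that contain more than one site."""
    grouped = group_by_prefix(site_ips, prefix_len)
    return {net: sorted(sites) for net, sites in grouped.items() if len(sites) > 1}


# Hypothetical inventory (assumption, not from the article)
SITE_IPS = {
    "site-a.example": "192.0.2.10",
    "site-b.example": "192.0.2.200",
    "site-c.example": "198.51.100.7",
    "site-d.example": "203.0.113.5",
}

if __name__ == "__main__":
    print(shared_ranges(SITE_IPS, 24))  # sites sharing a /24 (C-class) range
    print(shared_ranges(SITE_IPS, 16))  # sites sharing a /16 (B-class) range
```

Running the same check at both prefix lengths shows whether two sites overlap at the narrower /24 level or only at the broader /16 level.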

Advanced setups often combine offshore hosting providers, independent VPS services, and cloud platforms to increase geographic diversity. Many operators also buy expired domains from different marketplaces to ensure domains originate from varied registrars and ownership histories before deployment. Limiting each provider to a small percentage of the network helps prevent a large portion of sites from sharing the same infrastructure environment.
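The "small percentage" per provider can be checked with simple arithmetic. The sketch below is one possible way to do it; the 10% default in `max_share`, the provider names, and the inventory are assumptions chosen for illustration, since the article does not give a specific figure.

```python
from collections import Counter


def provider_shares(site_providers):
    """Return each provider's fraction of the total number of sites."""
    counts = Counter(site_providers.values())
    total = sum(counts.values())
    return {provider: n / total for provider, n in counts.items()}


def over_limit(site_providers, max_share=0.10):
    """List providers whose share of sites exceeds max_share (assumed threshold)."""
    shares = provider_shares(site_providers)
    return sorted(p for p, share in shares.items() if share > max_share)


# Hypothetical inventory (assumption, not from the article)
SITE_PROVIDERS = {
    "site-a.example": "host-x",
    "site-b.example": "host-x",
    "site-c.example": "host-x",
    "site-d.example": "host-y",
    "site-e.example": "host-z",
}

if __name__ == "__main__":
    print(provider_shares(SITE_PROVIDERS))
    print(over_limit(SITE_PROVIDERS, max_share=0.5))
```

Here three of the five sites sit on `host-x`, so it is the only provider flagged when the limit is set to 50%.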

Using content delivery networks can further mask hosting similarities while improving site performance. Reverse proxies add another layer between the origin server and public traffic. The goal of infrastructure camouflage is not only secrecy but also a reduction in the technical patterns that automated systems may correlate.

Visual and Plugin Randomization

Website appearance is another factor that can create recognizable patterns when building multiple sites. Networks that rely on identical themes, page structures, or plugin stacks often produce footprints that can be detected through automated analysis. Using different premium themes and customizing layouts helps reduce visual similarity across properties.

Plugin diversity is equally important because many plugins inject identifiable scripts or HTML structures. Limiting each site to a small set of necessary plugins and varying the combinations helps avoid repeating identical software stacks. Randomizing permalink structures, category systems, and navigation layouts also increases variation.

Technical details such as sitemap formats, robots.txt files, and error page templates should not be identical across the network. Image uploads should also be processed to remove EXIF metadata that could reveal device or editing information. Visual diversity combined with backend variation reduces detectable structural similarities.

Content Architecture Diversity

Content architecture strongly influences how search engines assess a website’s authenticity. Sites built solely for linking often contain thin pages, repetitive structures, or irregular publishing patterns. Reconstructing older site structures using archived references can provide a realistic foundation for new content development.

A common approach involves publishing at least 60 unique articles with varied word counts and formats. Some posts may range from 1500 words to more than 2500 words to create natural variation across the site. Different publishing schedules also help mimic real editorial workflows rather than automated posting patterns.

Outbound linking behavior should reflect normal editorial practices rather than linking only to pages within the same site. Including references to authoritative websites can improve contextual relevance and credibility signals. Distinct author profiles, varied page counts, and different site categories further reinforce architectural diversity.

Technical Footprint Elimination

Beyond visual design and hosting infrastructure, technical configuration can reveal strong network patterns. Search engines can detect similarities in HTML structures, meta tags, and system-generated comments across multiple sites. Removing or modifying default generator tags and template signatures helps reduce these detectable signals.
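To make the generator tag concrete: many content management systems emit a `<meta name="generator">` element by default. The sketch below uses Python's standard `html.parser` module to list such tags in a page; the class name, function name, and sample markup are illustrative assumptions.

```python
from html.parser import HTMLParser


class GeneratorTagFinder(HTMLParser):
    """Collect the content of every <meta name="generator"> tag."""

    def __init__(self):
        super().__init__()
        self.generators = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None for bare attributes, so default to ""
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "generator":
            self.generators.append(attr_map.get("content", ""))


def find_generators(html):
    """Return the generator strings found in an HTML document."""
    finder = GeneratorTagFinder()
    finder.feed(html)
    finder.close()
    return finder.generators


# Hypothetical page markup (assumption, not from the article)
SAMPLE_HTML = (
    '<html><head>'
    '<meta name="generator" content="WordPress 6.4">'
    '<meta name="viewport" content="width=device-width">'
    '</head><body></body></html>'
)

if __name__ == "__main__":
    print(find_generators(SAMPLE_HTML))
```

An empty result means no default generator signature remains in the page's markup.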

Server-level configurations should also vary between sites. Custom .htaccess rules can block aggressive scraping while introducing unique server directives for each domain. Analytics implementations, privacy policy pages, and contact forms should not use identical templates or tracking codes.

DNS settings and caching configurations can also reveal infrastructure relationships if they remain identical. Rotating or customizing these settings across sites helps reduce uniform technical fingerprints. Eliminating shared backend characteristics makes it harder for automated systems to associate sites with the same network.

Link Pattern Naturalization

Link placement and anchor text distribution remain some of the most scrutinized signals in search algorithms. Networks that repeatedly link using identical anchor phrases or predictable placements often trigger pattern recognition systems. Contextual links placed naturally within content tend to appear more organic than links in sidebars or footer widgets.

Anchor diversity is another critical factor when distributing backlinks. A balanced distribution that favors branded or partial-match anchors reduces the risk of over-optimization signals. Exact-match anchors should appear less frequently to avoid unnatural keyword targeting patterns.
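An anchor distribution can be audited by classifying each anchor and computing its share of the total. The sketch below is a simplified heuristic, not a standard method: the category rules, the brand `Acme`, the keyword, and the sample anchors are all assumptions for illustration.

```python
from collections import Counter


def classify_anchor(anchor, brand, keyword):
    """Label an anchor as branded, exact, partial, or other (simple heuristic)."""
    text = anchor.lower().strip()
    if brand.lower() in text:
        return "branded"
    if text == keyword.lower():
        return "exact"
    # Partial match: the anchor shares at least one word with the keyword
    if set(keyword.lower().split()) & set(text.split()):
        return "partial"
    return "other"


def anchor_distribution(anchors, brand, keyword):
    """Return each category's fraction of all anchors."""
    counts = Counter(classify_anchor(a, brand, keyword) for a in anchors)
    total = len(anchors)
    return {label: count / total for label, count in counts.items()}


# Hypothetical anchor list (assumption, not from the article)
ANCHORS = [
    "Acme Tools",
    "acme tools review",
    "best cordless drill",
    "cordless drill guide",
    "click here",
]

if __name__ == "__main__":
    print(anchor_distribution(ANCHORS, brand="Acme", keyword="best cordless drill"))
```

In this sample, branded anchors make up the largest share and the exact-match anchor appears once, which matches the balance described above.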

Publishing intervals also influence how link activity is interpreted. Gradual link placement over time resembles organic editorial linking more closely than sudden bursts. Limiting the overall contribution of controlled networks to a small percentage of the total backlink profile further reduces detectable influence.

Continuous Monitoring Protocol

Long-term network stability depends on consistent monitoring rather than one-time configuration. Search environments change frequently as algorithms improve their ability to identify unnatural patterns. Regular analysis helps detect potential footprint signals before they become ranking risks.

Tools used for backlink analysis and search performance monitoring can highlight unusual linking trends or indexing issues. Weekly reviews of link profiles and technical metrics allow early detection of anomalies. Monitoring indexing behavior also helps identify whether certain sites are losing visibility.

Periodic content updates maintain site activity and reduce signals associated with abandoned properties. Some network operators rotate domains or retire underperforming sites to maintain diversity within the overall structure. Continuous evaluation ensures that evolving detection methods are addressed over time.

Conclusion

Reducing detectable patterns across a Private Blog Network requires attention to infrastructure, content structure, technical configuration, and linking behavior. Search engines evaluate these signals collectively rather than in isolation, which makes diversification essential. Networks that repeat the same hosting providers, themes, or linking patterns create strong algorithmic footprints. Consistent monitoring and gradual adjustments help maintain variation across the entire system. Ultimately, minimizing identifiable similarities is the central principle behind maintaining long-term network stability.